This is the second blog post in a two-part series examining test automation software. This blog post focuses on lessons learned for finding the right software product for your organization. We recommend you also read our first post, which is dedicated to understanding the process for moving from manual to automated testing.

We recently had the opportunity to review the current landscape of test automation software to reduce manual testing for a custom-built website involving a wide range of features and dynamic content.

Our Approach

As background, you may already know that Selenium WebDriver has the highest market share of all automated testing tools. Selenium is specifically designed for browser-based automated testing. The Selenium toolkit includes WebDriver, which lets you write test scripts in widely used programming languages such as Java, Python, C#, and JavaScript.

We ran a few basic tests using Selenium with Python to understand the effort and skills required. After a short period, we decided that products in this research must meet or exceed the core functionality of Selenium but offer greater ease of use.

Next, we networked internally and put together a shortlist of test automation software to review. We collected information about each product's user interface and usability, intelligent features, plug-ins, pricing, training, and support features. Our test scenario used a typical webpage with everyday objects like date pickers, checkboxes, buttons, drop-down lists, data entry forms, hidden elements, and data grids with search results.

We continued our review by looking at software offerings with licensing costs in the mid-range, a relatively common price point, running between $5,000 and $10,000 per instance. We also looked at products in the lower range, up to $5,000 per instance, to see which features dropped off.

We closed our review of paid software by reviewing robotic process automation (RPA) solutions that are designed for use across the enterprise. Once that review was complete, we compared the paid products' performance with Selenium IDE, a lightweight, open-source tool for creating and running tests.

What We Learned

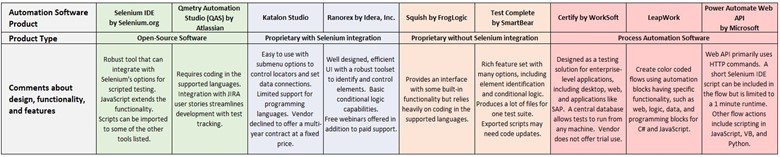

We tested nine automation software products to better understand their functionality. We compiled a product matrix that compared each offering across 17 criteria. The analysis highlights what differentiates some of the most popular software automation tools.

Figure 1 summarizes our results for the nine products. For a full comparison matrix of the products, please visit this resource. If you have questions about the comparison matrix, please reach out to our Managed Services group.

Figure 1. Test and Task Automation Software Review Summary

Our research into testing automation software resulted in many lessons learned. We synthesized our findings into five key takeaways that may help your enterprise pick the right automation software product.

Lesson 1: All of the products we tested met our functional criteria, regardless of price.

Overall, the key differentiators between products reflected the complexity of their architecture, supported code, the design of their interface, and general usability.

Similarity: Core Functionality

All products met the core requirement to identify web elements accurately and consistently. Some higher-end offerings did so particularly well, and some could compare visual objects and pull the underlying data from data visuals. All products stored the location of web elements within a data store and included options to give each object a friendly name and to change the method for locating it.

All products fetched data from external files for testing. Products at the higher end supported a wider range of data connections and had more options to manipulate the data. We found that steps that transform data within a test added to the test’s run time. During our evaluation, we found that using Excel's Power Query to transform the data beforehand was preferable to relying purely on the products.
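The same idea, preparing the data once before the test runs instead of transforming it on every run, can be sketched outside of Excel as well. The Python below is a stand-in for illustration and is not the Power Query approach we used; the column names and sample rows are hypothetical.

```python
import csv
import io
from datetime import datetime


def prepare_rows(raw_csv):
    """Clean raw CSV text once, before any test reads it.

    Trims stray whitespace and rewrites MM/DD/YYYY dates as ISO dates.
    """
    reader = csv.DictReader(io.StringIO(raw_csv))
    cleaned = []
    for row in reader:
        row = {key: value.strip() for key, value in row.items()}
        parsed = datetime.strptime(row["start_date"], "%m/%d/%Y")
        row["start_date"] = parsed.date().isoformat()
        cleaned.append(row)
    return cleaned


# Hypothetical input file contents with inconsistent spacing.
RAW = "name,start_date\n Ada ,01/15/2024\nGrace, 12/01/2023 \n"
for row in prepare_rows(RAW):
    print(row)
```

The cleaned rows can then be saved to the file the test tool reads, so the test itself only has to fetch values.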

Similarity: Exports and Scripting

Most products had a feature to export tests to scripts in one or more programming languages, and one product could both import and export test scripts. We checked this script conversion feature and found that the imported Python file ran in the application, but the exported script used legacy code that would take a lot of time to update.

Interestingly, all products we reviewed integrate with Jira for issue management, Jenkins and other CI/CD tools, and Zephyr for test management. The highest-priced enterprise solution also supported SAP GUIs.

Difference: On premises vs. Cloud

As we mentioned above, the key differences between the product tiers were mainly around the architecture, user interface and design, and ability to edit the test files using a programming language. Products at the top end are designed to serve as enterprise-wide solutions that can be hosted on the cloud or located on premises. There may be three or more machine tiers to support a web client for the test designer, a test runtime engine, virtual machines for parallel testing, and a database server. These products also have a user management system to set access to features and files.

Products at the middle to lower end of the price range are usually installed applications and use a separate license governor to control user access. Test files could be opened and edited using the supported programming language. Curiously, we found portability of test files decreased as the price increased.

Difference: User Interface and Usability

The user interface (UI) for most products in the mid-to-high range allowed you to drag & drop commands, test steps, and elements to create test cases quickly. These products also had sophisticated “spy tools” to locate elements on the web page. They produced colorful, graphical reports from test logs with toggles to show the test branches and corresponding lines of code that made it easy to find steps that failed and pinpoint issues. They also captured screens to provide a visual of the site’s behavior.

Products at the lower end of the range were not much different functionally, but they had a simpler interface and an adequate method of locating web elements. They had fewer types of test reports, but most had at least one data visual showing test success/failure with screen captures.

Lesson 2: Automated testing doesn’t change the team’s roles.

By design, automated test software makes testers more effective by allowing them to quickly find issues that point to code errors. Finding code errors by itself moves a manual tester toward the role of a test engineer. We were interested in “low-code” and “codeless” products that can make testing accessible to a wider range of roles.

Knowledge of Code Still Required

Our research found that products are marketed using roles like test ‘designers’ or ‘creators’ instead of more traditional roles, like analysts and engineers. The product demonstrations tended to focus on how easy it was to move objects within the interface, like a canvas. Product reps emphasized that – with training – anyone could create tests without coding. However, we found that in all cases, we were led more toward the underlying test code than away from it.

Our findings showed that an understanding of code is necessary to develop tests. At a very basic level, a test analyst must understand CSS and HTML and how to locate website elements on a page, including accessing dynamic data. A tester with a higher skill level understands how to set up the logical conditions for testing, how to write the commands, and how to order the steps properly. As a tester’s skills progress, they can use formulas and expressions, manage data connections, and read and interpret the test logs. Advanced testers would be able to generate data through code, set up repeatable loops, run tests in parallel, and construct tests using randomized values. These higher-level skills are in the wheelhouse of a test engineer.
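As an illustration of what "generating data through code" and "randomized values" can look like, here is a minimal Python sketch. The field names, value ranges, and start date are hypothetical and not taken from our test site; the point is only that a seeded generator gives random inputs that can still be reproduced when a test fails.

```python
import random
import string
from datetime import date, timedelta


def random_test_record(rng):
    """Build one record of randomized input values for a data entry form."""
    start = date(2020, 1, 1)
    return {
        # Integer in an inclusive range, e.g. a quantity field.
        "quantity": rng.randint(1, 100),
        # A date between 2020-01-01 and 2020-12-30 (offset of 0 to 364 days).
        "order_date": (start + timedelta(days=rng.randrange(365))).isoformat(),
        # An eight-letter lowercase string, e.g. a name field.
        "customer": "".join(rng.choices(string.ascii_lowercase, k=8)),
    }


# Seeding the generator makes a failing test reproducible.
rng = random.Random(42)
records = [random_test_record(rng) for _ in range(3)]
for record in records:
    print(record)
```

Logging the seed alongside the test results lets anyone on the team regenerate the exact same inputs later.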

Well-Rounded Teams Get to Automation

We concluded that a senior test engineer or test architect is needed to drive the conversion from manual to automated testing and support the process end-to-end. Cross-functional teams should be involved in product selection, defining the test strategy, and working through the system and technical requirements. Once the system is set up, a test engineer is needed to develop tests and provide team support and coordination.

Lesson 3: The rising demand for automation is also being served by open-source software.

In addition to Selenium’s significant market share in the automated test product space, many products across the pricing spectrum leverage Selenium’s WebDriver engine within their application’s framework. Using software and tools like Selenium, which are open source and available at no cost, provides substantial savings compared to paid software. The mid-range products we reviewed would require an upfront investment of around $10,000 for a single license, with annual support costs running between 25% and 35% of the license fee and training as an additional cost. A bit humbled by these figures, we returned to our review of Selenium and set up a pilot using the Selenium Integrated Development Environment (IDE).
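To make these figures concrete, here is a rough sketch of the arithmetic in Python. It assumes the support fee is charged every year, starting in the first year, and it treats training as a separate amount you fill in yourself. The function name and parameters are ours for illustration and do not come from any vendor's price list.

```python
def license_cost_range(license_fee, years, support_pct=(25, 35), training=0):
    """Return (low, high) total cost for one license over a number of years.

    Assumption: annual support is a percentage of the license fee and is
    charged in every year, including the first.
    """
    low_pct, high_pct = support_pct
    low = license_fee + license_fee * low_pct * years / 100 + training
    high = license_fee + license_fee * high_pct * years / 100 + training
    return low, high


# One mid-range license at roughly $10,000, held for three years.
low, high = license_cost_range(10_000, years=3)
print(f"Three-year cost: ${low:,.0f} to ${high:,.0f} before training")
```

Under those assumptions, a single $10,000 license comes to between $17,500 and $20,500 over three years, before training.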

Selenium IDE is a free browser extension that allows you to create test cases and organize them into test suites using a basic interface. It has a recorder tool that locates elements using CSS, XPath, and a couple of other locator types, and we found it accurate and consistent. The Selenese command set (Selenium’s language) includes the Run command, which takes a test’s name as its target. The Run command makes it easy to stack test cases so they can be run together, in any order. The Times command allows you to repeat a test any number of times, such as for load testing.

We used Selenium IDE to create tests with conditional logic, as we had with the other products, and found no gaps in command functionality. We performed data-driven testing using Selenium’s Execute Script command with a JavaScript statement to generate numbers, dates, and strings to validate data on the site. We also used the script command to perform math to check calculated data and to call statistical functions that create random data for testing. The IDE saves each project as a lightweight JSON file that can be edited with a plain text editor like Notepad or a code editor like VS Code. One drawback is that the IDE doesn’t have an easy way to capture screens, but that might be resolved using a plug-in or JS code.

Overall, we were pleased to find that we can use Selenium IDE to increase test coverage for no more than the investment of our time.

Lesson 4: Build capacity for automated testing internally and recognize advancement of skill.

The vendor-provided demonstrations were helpful for a quick intro to the product’s navigation and features. After that, we found that the free trials and resources available online were enough to become comfortable with the different testing tools. Some of the product vendors offer online training at no cost that can help to advance your understanding of the product.

Other vendors offered to create tests using our site, which was not an option for us. Before considering vendor-provided training, we advise consulting your technology policies and security officers with consideration for protecting your intellectual property and sensitive information.

With so many factors to be considered – the design of the website to be tested, data needed for testing, machine configuration, coding, tools – conversion from manual to automated testing involves much learning. As we conducted research during our review, we compiled resources and information that are appropriate for different testing roles and levels.

We recommend forming a small core of experts to implement automated testing and to train analysts or teams to use the tools. Each person on the core team should bring different skills and strengths that cover the technical, operational, and team considerations for adopting test automation. Doing so can help generate buy-in from your stakeholders as you right-size the effort for your organization.

Lesson 5: Determining the right size of test automation depends on your organization’s priorities, project requirements, resources, and culture for change.

As we described in our first blog post, adopting test automation starts with identifying the projects that would benefit the most from automation. We recommend having your project leads work with your test engineer to determine the overall project to test, followed by the specific features to be tested and the types of tests to be performed.

We recommend allowing time to go through a discovery process to find the issues and risks to success for implementing automated testing. Using open-source software for the evaluation phase can help you to work through issues without incurring licensing costs. If you want to opt for paid software, consider having your test engineer request a trial of one product to learn how it works. Use this experience to refine your testing product criteria before reviewing other products.

Define a few metrics that you can use for your evaluation and as test automation progresses. If you are planning to use paid software, gather the costs for licensing, machines, training, and annual support while in the trial phase. Have your test engineer and analysts track their time to build and run tests, and to provide documentation and training. Identify where issues were found and what kind of issues. This information can be used to help refine your budget for testing software, and it can be used as benchmarks for comparison over time.
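One simple benchmark that falls out of this time tracking is a break-even point: how many runs it takes before the hours spent building an automated test are repaid. The Python sketch below shows the calculation with hypothetical numbers; the function name and inputs are ours for illustration, and it ignores maintenance time, which you may want to track separately.

```python
import math


def break_even_runs(build_hours, manual_hours_per_run, automated_hours_per_run):
    """Return how many test runs it takes for automation to repay its build time.

    Returns None when an automated run saves no time over a manual run.
    """
    saved_per_run = manual_hours_per_run - automated_hours_per_run
    if saved_per_run <= 0:
        return None
    return math.ceil(build_hours / saved_per_run)


# Hypothetical tracked times: 40 hours to build the suite, 2 hours per
# manual pass, 0.5 hours to run and review the automated pass.
print(break_even_runs(40, 2, 0.5))
```

With these example numbers the suite pays for itself after 27 runs. Recomputing the figure as real tracking data comes in gives you a benchmark to compare over time.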

Moving from manual to automated processes requires everyone’s willingness and involvement. Test engineers should be transparent about the issues they encounter and how they overcame them. Testers need to fully participate in the new process, actively learn, and provide constructive feedback. Together, these actions will help to create a culture of support for automation.

Conclusion

Getting the right fit for test and task automation depends on your needs, willingness to invest, and the people and resources to support it. The lower end of the product spectrum may be the right fit if you have smaller projects and limited staffing and funds. If you have larger projects but a small test bench, investing in a mid-priced product could be the right approach, using staffing and resources strategically. Consider open-source tools if you have a test engineer with coding experience and test analysts who are willing to help shape the process and acquire new skills.

Test and task automation is a growing market, with new offerings and technology breakthroughs every year. AEM can help your organization navigate this expanding market. If you are interested in learning more, read our blog post on how to successfully automate tests to explore the space further. Please reach out to our team to learn more about our service offerings.