Tuning SQL statements and databases can be intimidating to Database Developers and DBAs alike. If you take too long, say something is “good enough,” or declare that something cannot be fixed, it may hurt your reputation or even have you looking for a new employer! But if done well, the benefits of resolving a performance issue range from helping your coworker to improving the lives of the operational community.

When tuning SQL statements and databases, utilize your experience and research skills. Avoid caving to pressure by simplifying problems to identify solutions. Knowing a few reliable strategies can help ensure success when addressing database-wide performance issues or troubleshooting a delete statement taking much longer than expected.

To help tune your skills, we have drawn from an example from our work. In this scenario (with some modification), a developer or DBA is resolving a legacy process that moves data from one database to another hosted on two separate servers. We walk you through the process we followed.

The Tuning Scenario

In this scenario, the process requires all data from the target partition to be deleted prior to refreshing the data. While that sounds simple, over one hundred partitioned tables are involved, and the process must be executed ON-DEMAND while the partitioned tables are accessed. What’s more, constraints and triggers cannot be disabled to avoid data integrity issues.

The tuning process must be executed in the background without any hang-ups to minimize operational impact. This process must be completed in minutes, not hours. After some detailed analysis and trying several fixes from the toolbox, we want to reduce the deletion time from 13 hours to seven minutes. Let’s look at how we can accomplish this feat.

Tuning the DELETE:

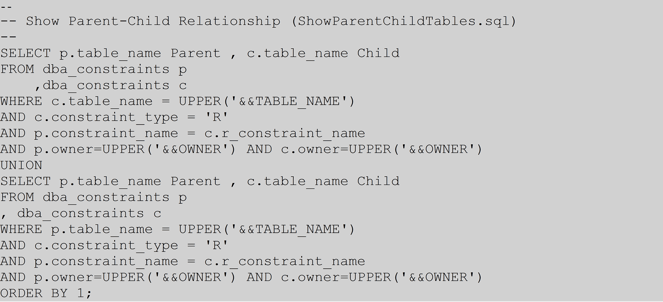

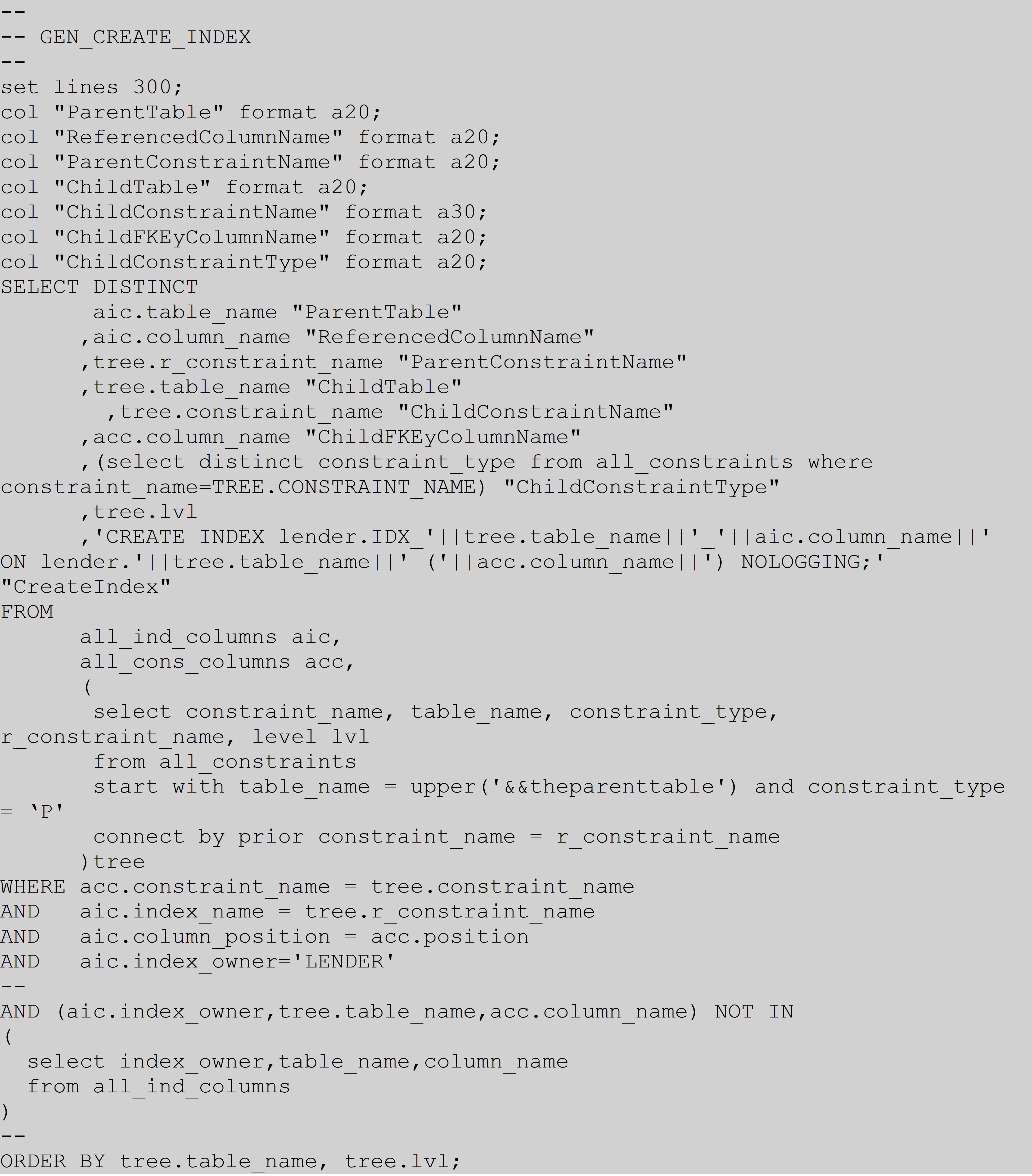

The first step is to identify the parent-child relationship for each of the tables, allowing you to construct a referentially correct DELETE order. Below is the script developed to determine the relationship where you will be prompted for the table and schema (owner) information:

The above script will produce the output below:

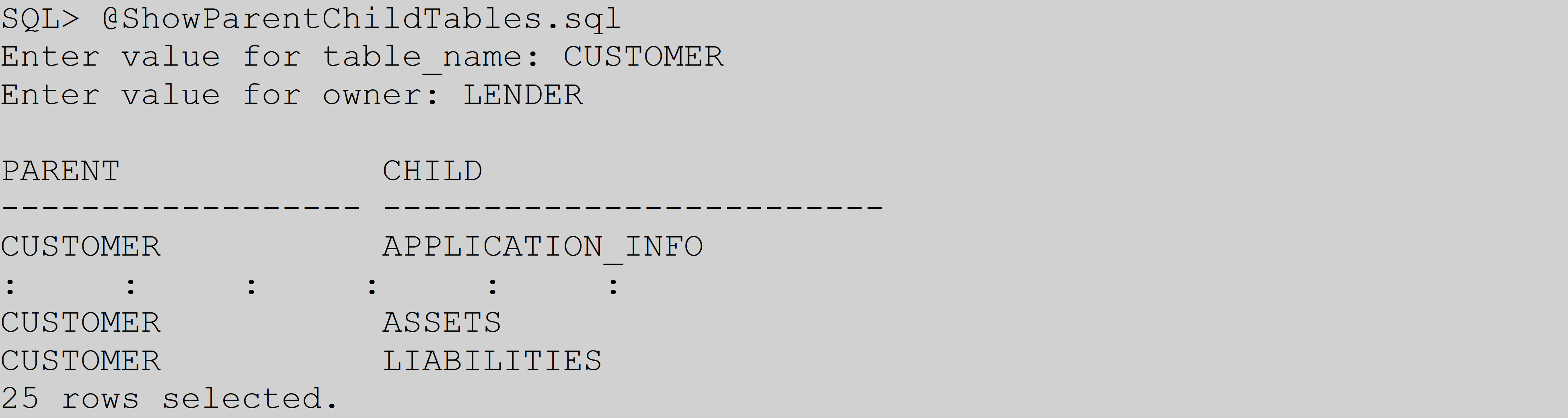

In this example, we have based the table partitions on the two-letter state abbreviation codes (for example, CA = California). With the parent-child relationship in hand, the referentially correct delete order is constructed as follows:

Understanding the Tuning Scenario:

Question: Why did it take 13 hours to delete the CUSTOMER table?

Theoretically, it should be a quick DELETE because we emptied all of the child tables. After trying several relatively simple solutions, such as TRUNCATE, PARALLEL DML, gathering stats, rebuilding indexes, moving tables to a different tablespace, and moving to a different partition, nothing seemed to improve the performance. It’s good to rule out the more obvious solutions with troubleshooting performance issues before exploring more complex alternatives. Once the “low hanging fruit” was out of the way, it became clear that the dependent tables also have partitions, so perhaps they were contributing to the performance impact.

Answer: During the DELETE process, the DELETE will hop to the child tables and check for dependent data across all of the state partitions. The DELETE process is effectively doing a full table scan across all dependent tables across state partitions because there are no indexes on these associated columns. This process is a considerable performance hit.

Adding an index to the child tables prevents full table scans from happening, using the script below. You will need to change the “lender” (schema) in the script to the schema/owner along with providing the parent table requiring the delete (command prompts you for this with &&theparenttable):

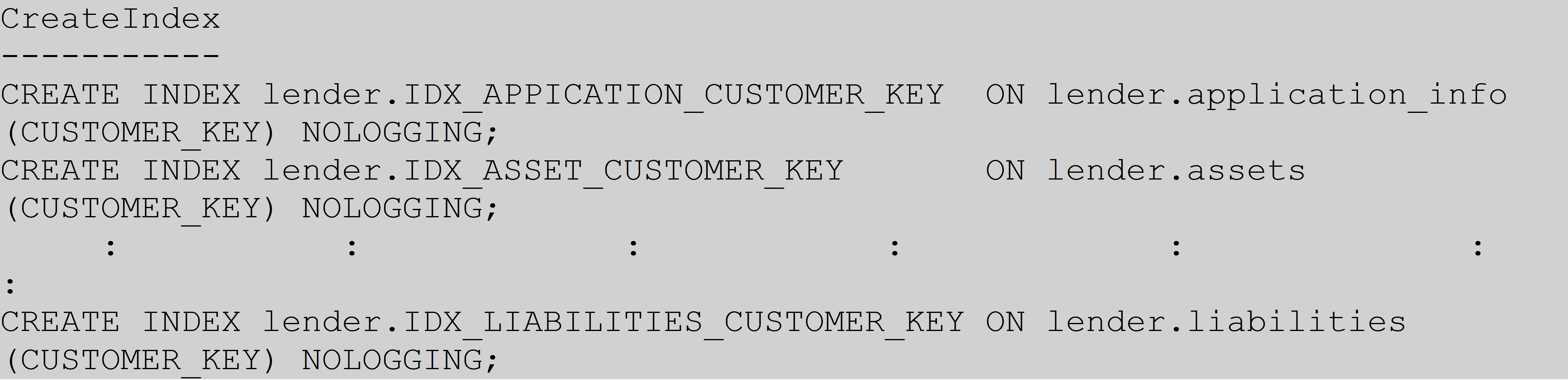

The script generates the necessary CREATE INDEX:

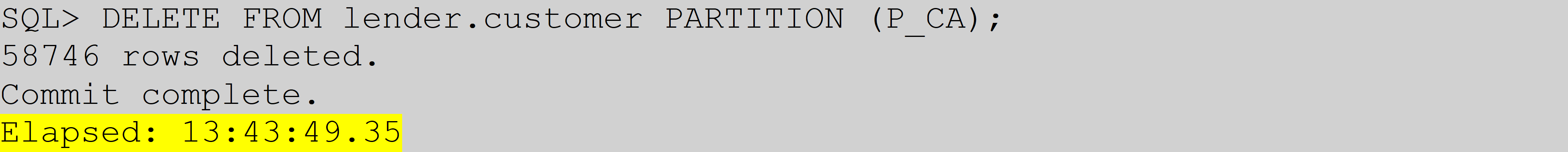

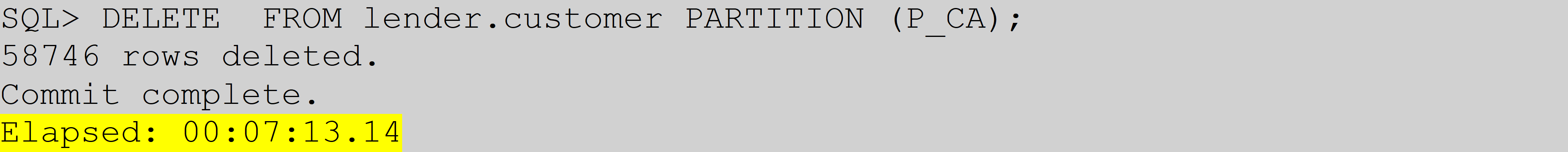

Indexing the child partitioned tables greatly improves the DELETE performance, as the timing data below shows:

Before creating the indexes

After creating the indexes

Conclusion:

When performing mass partitioned DELETEs, do not lose sight of dependent tables with partitions and associated referential integrity. You will need to create the associated indexes to maximize performance because these constraints do not inherently come with indexes. Following these steps can help solve your performance issues and improve your approach to performance tuning.